BigQuery DataFrames (BigFrames) AI Forecast#

This notebook is adapted from https://github.com/googleapis/python-bigquery-dataframes/blob/main/notebooks/generative_ai/bq_dataframes_ai_forecast.ipynb to work in the Kaggle runtime. It introduces forecasting with GenAI Foundation Model with BigFrames AI.

Install the bigframes package and upgrade other packages that are already included in Kaggle but have versions incompatible with bigframes.

%pip install --upgrade bigframes google-cloud-automl google-cloud-translate google-ai-generativelanguage tensorflow

Important: restart the kernel by going to “Run -> Restart & clear cell outputs” before continuing.

Configure bigframes to use your GCP project. First, go to “Add-ons -> Google Cloud SDK” and click the “Attach” button. Then,

from kaggle_secrets import UserSecretsClient

user_secrets = UserSecretsClient()

user_credential = user_secrets.get_gcloud_credential()

user_secrets.set_tensorflow_credential(user_credential)

PROJECT = "swast-scratch" # replace with your project

import bigframes.pandas as bpd

bpd.options.bigquery.project = PROJECT

bpd.options.bigquery.ordering_mode = "partial" # Optional: partial ordering mode can accelerate executions and save costs

1. Create a BigFrames DataFrames from BigQuery public data.#

df = bpd.read_gbq("bigquery-public-data.san_francisco_bikeshare.bikeshare_trips")

df

/usr/local/lib/python3.11/dist-packages/bigframes/core/log_adapter.py:175: TimeTravelCacheWarning: Reading cached table from 2025-08-18 19:19:20.590271+00:00 to avoid

incompatibilies with previous reads of this table. To read the latest

version, set `use_cache=False` or close the current session with

Session.close() or bigframes.pandas.close_session().

return method(*args, **kwargs)

| trip_id | duration_sec | start_date | start_station_name | start_station_id | end_date | end_station_name | end_station_id | bike_number | zip_code | ... | c_subscription_type | start_station_latitude | start_station_longitude | end_station_latitude | end_station_longitude | member_birth_year | member_gender | bike_share_for_all_trip | start_station_geom | end_station_geom | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 201802092135083596 | 788 | 2018-02-09 21:35:08+00:00 | 10th Ave at E 15th St | 222 | 2018-02-09 21:48:17+00:00 | 10th Ave at E 15th St | 222 | 3596 | <NA> | ... | <NA> | 37.792714 | -122.24878 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.24878 37.79271) | POINT (-122.24878 37.79271) |

| 1 | 201708152357422491 | 965 | 2017-08-15 23:57:42+00:00 | 10th St at Fallon St | 201 | 2017-08-16 00:13:48+00:00 | 10th Ave at E 15th St | 222 | 2491 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 2 | 201802281657253632 | 560 | 2018-02-28 16:57:25+00:00 | 10th St at Fallon St | 201 | 2018-02-28 17:06:46+00:00 | 10th Ave at E 15th St | 222 | 3632 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 3 | 201711170046091337 | 497 | 2017-11-17 00:46:09+00:00 | 10th St at Fallon St | 201 | 2017-11-17 00:54:26+00:00 | 10th Ave at E 15th St | 222 | 1337 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 4 | 201802201913231257 | 596 | 2018-02-20 19:13:23+00:00 | 10th St at Fallon St | 201 | 2018-02-20 19:23:19+00:00 | 10th Ave at E 15th St | 222 | 1257 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 5 | 201708242325001279 | 1341 | 2017-08-24 23:25:00+00:00 | 10th St at Fallon St | 201 | 2017-08-24 23:47:22+00:00 | 10th Ave at E 15th St | 222 | 1279 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | 1969 | Male | <NA> | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 6 | 201801161800473291 | 489 | 2018-01-16 18:00:47+00:00 | 10th St at Fallon St | 201 | 2018-01-16 18:08:56+00:00 | 10th Ave at E 15th St | 222 | 3291 | <NA> | ... | <NA> | 37.797673 | -122.262997 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.26300 37.79767) | POINT (-122.24878 37.79271) |

| 7 | 20180408155601183 | 1105 | 2018-04-08 15:56:01+00:00 | 13th St at Franklin St | 338 | 2018-04-08 16:14:26+00:00 | 10th Ave at E 15th St | 222 | 183 | <NA> | ... | <NA> | 37.803189 | -122.270579 | 37.792714 | -122.24878 | 1987 | Female | No | POINT (-122.27058 37.80319) | POINT (-122.24878 37.79271) |

| 8 | 201803141857032204 | 619 | 2018-03-14 18:57:03+00:00 | 13th St at Franklin St | 338 | 2018-03-14 19:07:23+00:00 | 10th Ave at E 15th St | 222 | 2204 | <NA> | ... | <NA> | 37.803189 | -122.270579 | 37.792714 | -122.24878 | 1982 | Other | No | POINT (-122.27058 37.80319) | POINT (-122.24878 37.79271) |

| 9 | 201708192053311490 | 743 | 2017-08-19 20:53:31+00:00 | 2nd Ave at E 18th St | 200 | 2017-08-19 21:05:54+00:00 | 10th Ave at E 15th St | 222 | 1490 | <NA> | ... | <NA> | 37.800214 | -122.25381 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.25381 37.80021) | POINT (-122.24878 37.79271) |

| 10 | 201711181823281960 | 353 | 2017-11-18 18:23:28+00:00 | 2nd Ave at E 18th St | 200 | 2017-11-18 18:29:22+00:00 | 10th Ave at E 15th St | 222 | 1960 | <NA> | ... | <NA> | 37.800214 | -122.25381 | 37.792714 | -122.24878 | 1988 | Male | <NA> | POINT (-122.25381 37.80021) | POINT (-122.24878 37.79271) |

| 11 | 20170810204454839 | 1256 | 2017-08-10 20:44:54+00:00 | 2nd Ave at E 18th St | 200 | 2017-08-10 21:05:50+00:00 | 10th Ave at E 15th St | 222 | 839 | <NA> | ... | <NA> | 37.800214 | -122.25381 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.25381 37.80021) | POINT (-122.24878 37.79271) |

| 12 | 201801171656553504 | 500 | 2018-01-17 16:56:55+00:00 | El Embarcadero at Grand Ave | 197 | 2018-01-17 17:05:16+00:00 | 10th Ave at E 15th St | 222 | 3504 | <NA> | ... | <NA> | 37.808848 | -122.24968 | 37.792714 | -122.24878 | 1987 | Male | No | POINT (-122.24968 37.80885) | POINT (-122.24878 37.79271) |

| 13 | 201801111613101305 | 858 | 2018-01-11 16:13:10+00:00 | Frank H Ogawa Plaza | 7 | 2018-01-11 16:27:28+00:00 | 10th Ave at E 15th St | 222 | 1305 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 14 | 201802241826551215 | 1235 | 2018-02-24 18:26:55+00:00 | Frank H Ogawa Plaza | 7 | 2018-02-24 18:47:31+00:00 | 10th Ave at E 15th St | 222 | 1215 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1969 | Male | No | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 15 | 201803091621483450 | 857 | 2018-03-09 16:21:48+00:00 | Frank H Ogawa Plaza | 7 | 2018-03-09 16:36:06+00:00 | 10th Ave at E 15th St | 222 | 3450 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 16 | 201801021932232717 | 914 | 2018-01-02 19:32:23+00:00 | Frank H Ogawa Plaza | 7 | 2018-01-02 19:47:38+00:00 | 10th Ave at E 15th St | 222 | 2717 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1984 | Male | Yes | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 17 | 201803161910283751 | 564 | 2018-03-16 19:10:28+00:00 | Frank H Ogawa Plaza | 7 | 2018-03-16 19:19:52+00:00 | 10th Ave at E 15th St | 222 | 3751 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1987 | Male | No | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 18 | 20171212152403227 | 854 | 2017-12-12 15:24:03+00:00 | Frank H Ogawa Plaza | 7 | 2017-12-12 15:38:17+00:00 | 10th Ave at E 15th St | 222 | 227 | <NA> | ... | <NA> | 37.804562 | -122.271738 | 37.792714 | -122.24878 | 1984 | Male | <NA> | POINT (-122.27174 37.80456) | POINT (-122.24878 37.79271) |

| 19 | 201803131437033724 | 917 | 2018-03-13 14:37:03+00:00 | Grand Ave at Webster St | 181 | 2018-03-13 14:52:20+00:00 | 10th Ave at E 15th St | 222 | 3724 | <NA> | ... | <NA> | 37.811377 | -122.265192 | 37.792714 | -122.24878 | 1989 | Male | No | POINT (-122.26519 37.81138) | POINT (-122.24878 37.79271) |

| 20 | 201712061755593426 | 519 | 2017-12-06 17:55:59+00:00 | Lake Merritt BART Station | 163 | 2017-12-06 18:04:39+00:00 | 10th Ave at E 15th St | 222 | 3426 | <NA> | ... | <NA> | 37.79732 | -122.26532 | 37.792714 | -122.24878 | 1986 | Male | <NA> | POINT (-122.26532 37.79732) | POINT (-122.24878 37.79271) |

| 21 | 20180404210034451 | 366 | 2018-04-04 21:00:34+00:00 | Lake Merritt BART Station | 163 | 2018-04-04 21:06:41+00:00 | 10th Ave at E 15th St | 222 | 451 | <NA> | ... | <NA> | 37.79732 | -122.26532 | 37.792714 | -122.24878 | 1987 | Male | No | POINT (-122.26532 37.79732) | POINT (-122.24878 37.79271) |

| 22 | 201801231907161787 | 626 | 2018-01-23 19:07:16+00:00 | Lake Merritt BART Station | 163 | 2018-01-23 19:17:43+00:00 | 10th Ave at E 15th St | 222 | 1787 | <NA> | ... | <NA> | 37.79732 | -122.26532 | 37.792714 | -122.24878 | 1987 | Male | No | POINT (-122.26532 37.79732) | POINT (-122.24878 37.79271) |

| 23 | 201708271057061157 | 973 | 2017-08-27 10:57:06+00:00 | Lake Merritt BART Station | 163 | 2017-08-27 11:13:19+00:00 | 10th Ave at E 15th St | 222 | 1157 | <NA> | ... | <NA> | 37.79732 | -122.26532 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.26532 37.79732) | POINT (-122.24878 37.79271) |

| 24 | 201709071348372074 | 11434 | 2017-09-07 13:48:37+00:00 | Lake Merritt BART Station | 163 | 2017-09-07 16:59:12+00:00 | 10th Ave at E 15th St | 222 | 2074 | <NA> | ... | <NA> | 37.79732 | -122.26532 | 37.792714 | -122.24878 | <NA> | <NA> | <NA> | POINT (-122.26532 37.79732) | POINT (-122.24878 37.79271) |

25 rows × 21 columns

2. Preprocess Data#

Only take the start_date after 2018 and the “Subscriber” category as input. start_date are truncated to each hour.

df = df[df["start_date"] >= "2018-01-01"]

df = df[df["subscriber_type"] == "Subscriber"]

df["trip_hour"] = df["start_date"].dt.floor("h")

df = df[["trip_hour", "trip_id"]]

Group and count each hour’s num of trips.

df_grouped = df.groupby("trip_hour").count()

df_grouped = df_grouped.reset_index().rename(columns={"trip_id": "num_trips"})

df_grouped

| trip_hour | num_trips | |

|---|---|---|

| 0 | 2018-01-01 00:00:00+00:00 | 20 |

| 1 | 2018-01-01 01:00:00+00:00 | 25 |

| 2 | 2018-01-01 02:00:00+00:00 | 13 |

| 3 | 2018-01-01 03:00:00+00:00 | 11 |

| 4 | 2018-01-01 05:00:00+00:00 | 4 |

| 5 | 2018-01-01 06:00:00+00:00 | 8 |

| 6 | 2018-01-01 07:00:00+00:00 | 8 |

| 7 | 2018-01-01 08:00:00+00:00 | 20 |

| 8 | 2018-01-01 09:00:00+00:00 | 30 |

| 9 | 2018-01-01 10:00:00+00:00 | 41 |

| 10 | 2018-01-01 11:00:00+00:00 | 45 |

| 11 | 2018-01-01 12:00:00+00:00 | 54 |

| 12 | 2018-01-01 13:00:00+00:00 | 57 |

| 13 | 2018-01-01 14:00:00+00:00 | 68 |

| 14 | 2018-01-01 15:00:00+00:00 | 86 |

| 15 | 2018-01-01 16:00:00+00:00 | 72 |

| 16 | 2018-01-01 17:00:00+00:00 | 72 |

| 17 | 2018-01-01 18:00:00+00:00 | 47 |

| 18 | 2018-01-01 19:00:00+00:00 | 32 |

| 19 | 2018-01-01 20:00:00+00:00 | 34 |

| 20 | 2018-01-01 21:00:00+00:00 | 27 |

| 21 | 2018-01-01 22:00:00+00:00 | 15 |

| 22 | 2018-01-01 23:00:00+00:00 | 6 |

| 23 | 2018-01-02 00:00:00+00:00 | 2 |

| 24 | 2018-01-02 01:00:00+00:00 | 1 |

25 rows × 2 columns

3. Make forecastings for next 1 week with DataFrames.ai.forecast API#

# Using all the data except the last week (2842-168) for training. And predict the last week (168).

result = df_grouped.head(2842-168).ai.forecast(timestamp_column="trip_hour", data_column="num_trips", horizon=168)

result

| forecast_timestamp | forecast_value | confidence_level | prediction_interval_lower_bound | prediction_interval_upper_bound | ai_forecast_status | |

|---|---|---|---|---|---|---|

| 0 | 2018-04-24 12:00:00+00:00 | 144.577728 | 0.95 | 120.01921 | 169.136247 | |

| 1 | 2018-04-25 00:00:00+00:00 | 54.215515 | 0.95 | 46.8394 | 61.591631 | |

| 2 | 2018-04-26 05:00:00+00:00 | 8.140533 | 0.95 | -14.613272 | 30.894339 | |

| 3 | 2018-04-26 14:00:00+00:00 | 198.744949 | 0.95 | 174.982268 | 222.50763 | |

| 4 | 2018-04-27 02:00:00+00:00 | 9.91806 | 0.95 | -26.749948 | 46.586069 | |

| 5 | 2018-04-29 03:00:00+00:00 | 32.063339 | 0.95 | -35.730978 | 99.857656 | |

| 6 | 2018-04-27 04:00:00+00:00 | 25.757111 | 0.95 | 8.178037 | 43.336184 | |

| 7 | 2018-04-30 06:00:00+00:00 | 89.808456 | 0.95 | 15.214961 | 164.401952 | |

| 8 | 2018-04-30 02:00:00+00:00 | -10.584175 | 0.95 | -60.772024 | 39.603674 | |

| 9 | 2018-04-30 05:00:00+00:00 | 18.118111 | 0.95 | -40.902133 | 77.138355 | |

| 10 | 2018-04-24 07:00:00+00:00 | 359.036957 | 0.95 | 250.880334 | 467.193579 | |

| 11 | 2018-04-25 10:00:00+00:00 | 227.272049 | 0.95 | 170.918819 | 283.625279 | |

| 12 | 2018-04-27 15:00:00+00:00 | 208.631363 | 0.95 | 188.977435 | 228.285291 | |

| 13 | 2018-04-25 13:00:00+00:00 | 159.799911 | 0.95 | 150.066363 | 169.53346 | |

| 14 | 2018-04-26 12:00:00+00:00 | 190.226944 | 0.95 | 177.898865 | 202.555023 | |

| 15 | 2018-04-24 04:00:00+00:00 | 11.162338 | 0.95 | -18.581041 | 40.905717 | |

| 16 | 2018-04-24 14:00:00+00:00 | 136.70816 | 0.95 | 134.165413 | 139.250907 | |

| 17 | 2018-04-28 21:00:00+00:00 | 65.308899 | 0.95 | 63.000915 | 67.616883 | |

| 18 | 2018-04-29 20:00:00+00:00 | 71.788849 | 0.95 | -2.49023 | 146.067928 | |

| 19 | 2018-04-30 15:00:00+00:00 | 142.560944 | 0.95 | 41.495553 | 243.626334 | |

| 20 | 2018-04-26 18:00:00+00:00 | 533.783813 | 0.95 | 412.068752 | 655.498875 | |

| 21 | 2018-04-28 03:00:00+00:00 | 25.379761 | 0.95 | 22.565752 | 28.193769 | |

| 22 | 2018-04-30 12:00:00+00:00 | 158.313385 | 0.95 | 79.466457 | 237.160313 | |

| 23 | 2018-04-25 07:00:00+00:00 | 358.756592 | 0.95 | 276.305603 | 441.207581 | |

| 24 | 2018-04-27 22:00:00+00:00 | 103.589096 | 0.95 | 94.45235 | 112.725842 |

25 rows × 6 columns

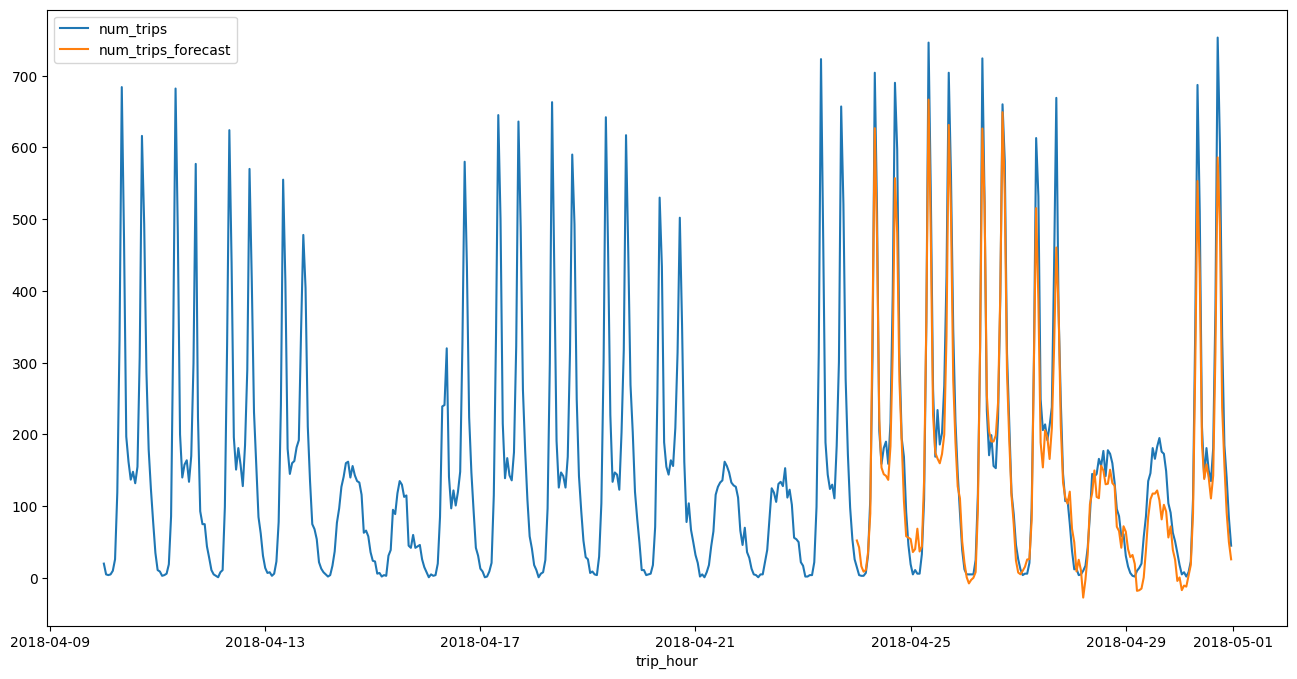

4. Process the raw result and draw a line plot along with the training data#

result = result.sort_values("forecast_timestamp")

result = result[["forecast_timestamp", "forecast_value"]]

result = result.rename(columns={"forecast_timestamp": "trip_hour", "forecast_value": "num_trips_forecast"})

df_all = bpd.concat([df_grouped, result])

df_all = df_all.tail(672) # 4 weeks

Plot a line chart and compare with the actual result.

df_all = df_all.set_index("trip_hour")

df_all.plot.line(figsize=(16, 8))

<Axes: xlabel='trip_hour'>